Over the last three months, we've interviewed more than 50 fake candidates. Not by accident. On purpose.

This is what we documented inside those interviews. It's also why catching this kind of fraud at the interview stage is already too late.

How we started interviewing fake candidates

Earlier this year we opened a Lead Platform Engineer role. Getting there, however, took longer than expected, and not for the reasons you'd assume.

The role was real and the search was active. Hundreds of applications came in within the first few weeks. When we ran them through Brainner's identity check at the application stage, several profiles came back flagged as high-risk. That on its own wasn't surprising. What we did next was less standard.

Instead of archiving those flagged candidates, we decided to interview a handful of them. The reasoning was simple: we wanted to see whether the signals Brainner surfaces at the application stage actually correspond to fake candidates in practice, and we wanted to understand how these operations work from the inside. What do they say? How do they respond under pressure? Where does the script break down?

Over the following weeks, that initial sample grew. By the end of the three-month window we had documented more than 50 of these interviews. Everything below comes out of that exercise.

We shared a few of these patterns on LinkedIn. The conversation that followed confirmed what we suspected: the same patterns are showing up in recruiting teams across the US, EMEA, and LATAM.

Below is what came out of three months of running these interviews.

Before you read the list

None of these signals work in isolation. A delayed answer can be nerves. A blurred background can be a messy room. Fluency drops can be a real bilingual candidate having an off day.

What identifies a fake candidate is the accumulation. Five or six of these in the same call, or a wave of applications that all arrived the moment you posted a remote role: that's the pattern.
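To make the accumulation idea concrete, here is a minimal Python sketch of how a recruiter's call notes could be tallied. The signal labels and the default threshold of five are illustrative assumptions based on the categories in this article, not a description of any production scoring model.

```python
# Illustrative labels for signals described in this article (assumed names).
KNOWN_SIGNALS = frozenset({
    "fluency_drop",
    "timezone_mismatch",
    "vague_local_knowledge",
    "blur_plus_headset",
    "lip_sync_off",
    "consistent_answer_delay",
    "eyes_off_screen",
    "cannot_name_manager",
    "no_tradeoff_depth",
})


def count_signals(observed):
    """Count the distinct known signals noted during one call."""
    return len(set(observed) & KNOWN_SIGNALS)


def is_pattern(observed, threshold=5):
    """Return True when enough distinct signals accumulate in the same call."""
    return count_signals(observed) >= threshold
```

A single noted signal, or the same signal written down twice, stays below the threshold. Only several different signals in one call cross it, and even then the result is a prompt for closer review, not a verdict.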

A note on what this article covers. Not every fake candidate is the same. Some are individuals who exaggerate or invent parts of their resume to land a job. That category exists, but it's not what we're focused on here. This article documents what we observed when interviewing candidates flagged as part of coordinated, organized operations: groups running AI-generated resumes, proxy candidates, and identity fraud at scale. There are at least 4 distinct types of fake applicants, and the signals below apply primarily to the most sophisticated three.

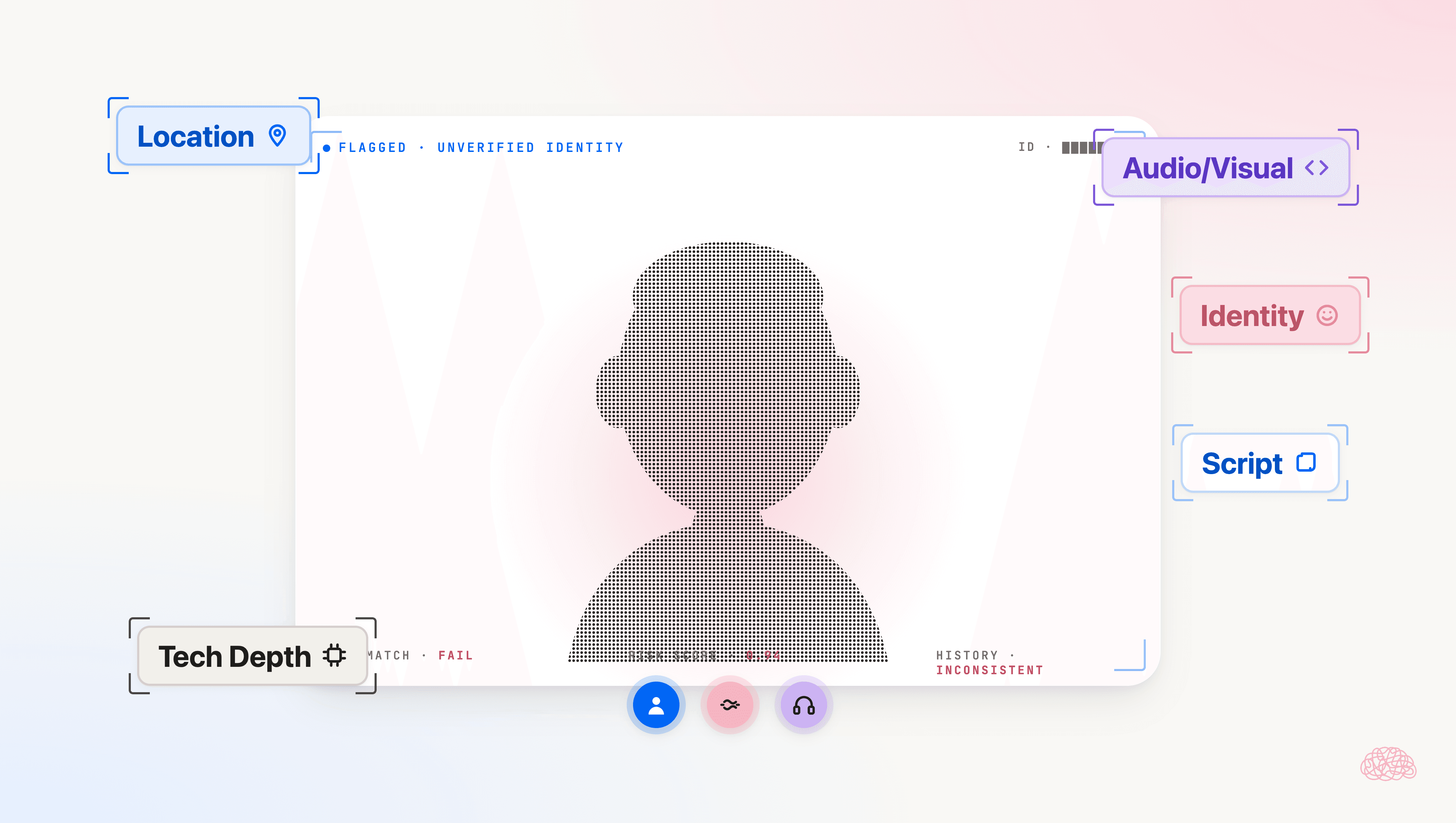

A second note on what we're publishing. The signals below are observational: things a recruiter can see, hear, or notice during an interview. They're useful precisely because they don't depend on tooling. The technical signals we use inside Brainner to flag these candidates at the application stage (IP and device-level patterns, identity cross-references against fraud network databases, cross-application pattern matching) are not in this article. Those are the ones organized operations would adapt to first if we made them public, which is why they stay between us and our customers.

Category 1: Location inconsistencies

The most common red flag. The candidate's resume says they're in a specific US state. The interview tells a different story.

"US-based" candidates often struggle with fluent English. The script holds up while the questions are predictable. Fluency breaks down the moment the conversation gets casual.

Other location signals to watch for:

- Everyone "moved during COVID" to Atlanta, Florida, or Texas to work remotely. The location isn't the flag. The pattern across a wave of applications is.

- The local time they give you doesn't match the time zone they claim to be in.

- Calendly confirmations showing an overseas time zone. Easy to miss until you start checking.

- Vague answers when asked about the city they claim to live in, and failed local-knowledge questions.

A trick that works: when you test local knowledge, don't name the city in your question. Ask about "the city you're in" instead of naming it. If they're being coached by a system, a named city is easier to feed than an open prompt.

We first documented this pattern on roles posted from the US and Canada. We're now seeing it in Europe and LATAM too. The operations are adapting: some are now running in Spanish and Portuguese, which makes detection harder but doesn't change the underlying signals.
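The time-zone checks in this category can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the state-to-zone mapping is a hypothetical, incomplete table, and the booking time zone is assumed to be available as an IANA zone name from whatever scheduling tool you use.

```python
# Hypothetical, incomplete mapping from a claimed US state to the IANA
# time zones that cover it. Extend it for the locations you hire in.
EXPECTED_ZONES = {
    "Georgia": {"America/New_York"},
    "Florida": {"America/New_York", "America/Chicago"},
    "Texas": {"America/Chicago", "America/Denver"},
}


def booking_zone_matches(claimed_state, booking_zone):
    """Compare a scheduling time zone against the claimed state.

    Returns True or False, or None when the state is not in the mapping.
    """
    expected = EXPECTED_ZONES.get(claimed_state)
    if expected is None:
        return None
    return booking_zone in expected
```

A mismatch is one signal among several. A real candidate who is traveling will also book from an unexpected time zone, so treat a False result as a reason to ask, not as proof.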

Category 2: Audio and visual setup

Blurred backgrounds and headsets in combination. The combination is the signal, not either one alone.

If you ask for the blur and headset to be removed and they refuse or stall, you have your answer. If they comply, ask them to cover their face with their hand for a second. A real person moves normally. A deepfake often glitches.

Other audio and visual flags:

- Strange background noises. Sometimes it sounds like a call center.

- Lips not in sync with voice. Common with deepfake or avatar technology, and a strong indicator of a proxy candidate operating behind a synthetic face.

Category 3: The script breaks down

Identical answer structure across questions, like an LLM is behind it. The same shape that gives away AI-generated resumes shows up in AI-generated answers under pressure. Small but consistent delays between question and answer. Eyes drifting off-screen mid-answer, which usually means they're being coached in real time.

Other signals in this category:

- Drops from the call when pressed with follow-up questions. Never rejoins. Never follows up by email.

- Ask them to answer with their eyes closed. With a synthetic face, the video often fails to match the voice, and the script crumbles.

- Politically correct, overly polished answers that sound lifted from a CEO's keynote. No real opinions, no rough edges.

- Ask them if they'd be open to an on-site visit or coming into the office once a month. A genuine remote candidate usually has a clear answer. Hesitation or deflection here is worth noting.

Category 4: Identity and background

The candidate can't name their managers from previous roles. And yes, they've all worked at Stripe, Amazon, Airbnb, or Tesla.

A different person sometimes appears in a later interview round. This is one of the clearest signs of a proxy interviewer setup: one person handles the early screen, another handles the technical round. The pattern is striking when you compare screenshots between rounds: the face in round one isn't the face in round three.

The name on the application belongs to a real person on LinkedIn, but that person has nothing to do with the role or the industry. Sometimes the LinkedIn profile is fully fabricated: no photo, fewer than 10 connections, created within the last six months. This is where identity verification becomes critical. The resume can be polished, the LinkedIn URL can look right, but the actual identity behind the application doesn't hold up to cross-checks.

Category 5: Technical depth

The candidate lists a tech stack matching the job description perfectly. Every tool, every framework, every cloud provider, all aligned. Then you ask why.

They're quick to list the stack. They can't explain trade-offs. They can't tell you why those tools instead of alternatives. They can't talk through a real engineering decision. The depth isn't there because the experience isn't there. The resume is a lookup, not a story.

There's more than one type of fake candidate

As we noted at the start, this article focuses on coordinated, organized fraud rather than individuals padding their resumes. Within that scope, there are still distinct profiles. Some are coordinated groups running AI-generated resumes at scale. Some are state-sponsored. And some, the most uncomfortable category to think about, are real people fronting for someone else.

That last category is the Frontman: a real person who applies and interviews under their own face and name, then hands the actual job to a proxy candidate once hired. It's run at scale by groups that present a single trained engineer with every certificate, a European education, and C2 English, while the day-to-day work is done by someone else entirely. The company pays for months before catching it.

We've broken down the full taxonomy in "4 Types of Fake Applicants".

The real problem is what happens before the interview

As a recruiter, spotting most of this during an interview isn't that hard once you know what to look for.

The problem is everything it takes to get to that point:

1. Reading the resume

2. Scheduling the interview

3. Running the call

That's time your team will never get back. Time spent on a candidate who shouldn't have been in the pipeline in the first place.

Fraud rarely shows up in a single signal. It's a consistency problem. What flags risk is misalignment: between LinkedIn, CV, and references; between time zone or IP address and claimed location; between the listed stack and the shallow technical depth you find when you dig; and in career narratives that are too clean and too often repeated.
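As a rough illustration of treating fraud as a consistency problem, the Python sketch below compares the same field across several sources and reports where they disagree. The source names, field names, and sample values are hypothetical, and the normalization is deliberately naive (trimmed, lowercased string comparison).

```python
def find_mismatches(sources, fields):
    """Return the fields whose normalized values differ between sources.

    sources maps a source name (for example "cv") to a dict of field values.
    Sources that do not provide a field are skipped for that field.
    """
    mismatches = {}
    for field_name in fields:
        values = {
            source: record[field_name].strip().lower()
            for source, record in sources.items()
            if record.get(field_name)
        }
        if len(set(values.values())) > 1:
            mismatches[field_name] = values
    return mismatches


# Hypothetical records for one applicant.
applicant = {
    "cv": {"location": "Austin, TX", "last_employer": "Acme"},
    "linkedin": {"location": "austin, tx ", "last_employer": "Globex"},
    "scheduler": {"location": "Austin, TX"},
}
report = find_mismatches(applicant, ["location", "last_employer"])
```

Here the location agrees across all three sources once case and whitespace are ignored, while the last employer differs between the CV and LinkedIn. Real records need fuzzier matching than this, since the same city or company is often written several ways.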

For a deeper breakdown of what to look for at the application stage, read "How to detect fake applicants: best practices" and "How to detect fake applicants without slowing down your pipeline".

Why this can't be done manually at scale

Some of the signals above can be caught by a sharp recruiter in 5 to 10 minutes per candidate. That works when you have 15 applicants for a role.

It doesn't work when you have 500.

It also doesn't work when the signals are technical: IP address inconsistencies, device fingerprints, duplicate application patterns across multiple roles, identity records cross-referenced against fraud network databases. Those checks are not realistic to run manually, on every applicant, every time a role opens.
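To show why a check like duplicate-application matching belongs to software and not to a recruiter, here is a generic Python sketch that groups applications whose resume text is identical after light normalization. It is an assumption-laden illustration of the general idea, not Brainner's method: the function names are hypothetical, and exact-hash matching only catches copies that differ in case, spacing, or punctuation.

```python
import hashlib
import re
from collections import defaultdict


def fingerprint(text):
    """Hash resume text after lowercasing and collapsing non-alphanumerics."""
    normalized = re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def duplicate_groups(applications):
    """Group application IDs whose resume text shares a fingerprint.

    applications maps an application ID to its resume text.
    Only groups with more than one application are returned.
    """
    groups = defaultdict(list)
    for app_id, text in applications.items():
        groups[fingerprint(text)].append(app_id)
    return [ids for ids in groups.values() if len(ids) > 1]
```

Running this over every applicant for every open role is trivial for a script and unrealistic by hand. Catching reworded templates would need near-duplicate techniques on top of it, which this sketch does not attempt.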

There's a second problem with the manual approach: the operations adapt. The patterns published in articles like this one get engineered around within weeks. A signal that works today gets defeated tomorrow. A static list of red flags has a shelf life.

That's the gap that gets exploited. The signals are there. The capacity to check them, at the speed and scale modern hiring requires, isn't.

How Brainner catches this before the interview

Brainner is a candidate fraud detection platform that flags high-risk applicants the moment they apply, before any recruiter opens a profile. Every application that comes in through your ATS is cross-referenced against 3.5 billion data points and evaluated against 20+ fraud patterns covering identity, behavior, and resume consistency. When the accumulation hits a threshold, Brainner surfaces a High Risk flag with the specific signals that triggered the alert.

Identity verification sits at the core of how Brainner detects fake candidates. The platform runs an applicant identity check across public records, professional networks, and proprietary fraud signal databases. AI-generated resumes are flagged through pattern recognition trained on millions of analyzed profiles. Proxy candidates and coordinated application campaigns are caught through cross-application analysis: the same template, the same writing patterns, the same identity inconsistencies showing up across multiple roles or multiple applicants.

The flag is a signal, not a verdict. Your recruiters stay in control of every decision. But the candidates who make it to your interview stage are worth your time. The ones that aren't never get there.

Brainner integrates with Greenhouse, Lever, Workday, Workable, Recruitee, Zoho Recruit, iCIMS, JazzHR, Ashby, SmartRecruiters, and BambooHR through bidirectional sync, so the risk signals show up where your team already works.

Get the full guide

We documented 20 signals across 5 categories in a guide you can download and share with your team. It includes the location, audio and visual, script, identity, and technical-depth signals above, plus the pre-call indicators visible at the application stage.

If you want to see how Brainner flags this kind of operation before it reaches your pipeline, request a demo.

FAQs

How does Brainner detect fake applicants?

Brainner detects fake applicants by analyzing every incoming application against 20+ fraud patterns covering identity, behavior, and resume consistency. The platform cross-references each candidate against 3.5 billion data points, including public records, professional networks, and proprietary fraud signal databases, and surfaces a High Risk flag with the specific signals that triggered the alert. Brainner operates at the application stage, before any recruiter opens a profile, so coordinated operations are caught before they consume interview time. Recruiters review each flag and decide whether to advance or archive. See how Brainner's fraud detection works.

What should a candidate fraud detection tool cover?

A candidate fraud detection tool should cover three capabilities: identity verification at the application stage, AI-generated resume detection, and native integration with your ATS so high-risk candidates are flagged inside the workflow recruiters already use. It should also handle volume without slowing the pipeline, since manual fraud checks aren't realistic when a remote role attracts hundreds or thousands of applicants. Brainner is built around those three capabilities and covers the full range of fraud profiles, from AI-generated resumes to proxy candidates and coordinated identity fraud operations. See how Brainner's fraud detection works.

Which ATS platforms does Brainner integrate with?

Brainner integrates natively with Greenhouse, Lever, Workday, Workable, Recruitee, Zoho Recruit, iCIMS, JazzHR, Ashby, SmartRecruiters, and BambooHR through bidirectional sync. When a new application enters your ATS, Brainner analyzes it in real time and writes the High Risk flag back into the candidate record inside your ATS, so recruiters see the risk signal in the system they already work in. No separate dashboard, no extra steps. Risky candidates are flagged before the interview is scheduled. For a deeper look at how this fits an existing pipeline, read "How to detect fake applicants without slowing down your pipeline".

Is Brainner compliant with SOC 2, GDPR, and CCPA?

Brainner is SOC 2 Type II certified, GDPR compliant, and CCPA compliant. Data is processed inside the same security perimeter you've already approved for your ATS, and Brainner does not store candidate data beyond the operational window required for screening. For enterprise and RPO buyers running compliance-sensitive procurement, this means Brainner's fraud detection can be deployed without expanding your regulatory footprint.

Which industries use Brainner?

Brainner is used by teams hiring across a wide range of industries, including fintech and financial services, staffing and RPO, healthcare and biotech, SaaS and software, retail and e-commerce, energy and manufacturing, crypto and Web3, edtech, legal tech, gaming and entertainment, cybersecurity, and consulting. The common thread is exposure: any company posting remote tech roles (Software Engineer, Data Analyst, QA, DevOps, Security Engineer, etc.) attracts coordinated fraud campaigns, regardless of sector. Industries with regulatory pressure, such as healthcare, financial services, and crypto, face additional risk from compliance and data sensitivity, which makes early-stage fraud detection particularly important. Brainner flags high-risk applicants automatically at the moment of application, so recruiters can bulk-archive coordinated fraud campaigns instead of reviewing each profile manually. Request a demo to see Brainner flag fraudulent applicants on your live ATS data.
